
So you wanna know a bit more about the WhiteWinterWolf?

Well, for now I’m just a self-learner :). I’ve just passed a set of certifications, which let me go back to my initial passions and gave me the occasion to both expand and better organize an otherwise mainly self-taught knowledge. I also created this website, to keep my notes and projects from decaying, abandoned, in the depths of my hard disk.

But before that I worked for seven years in the global support team of a large software development and IT services company named Axway, providing “tools for enterprise software, Enterprise Application Integration, business activity monitoring, business analytics, mobile application development and web API management” as Wikipedia words it. I was mainly involved in the data transfer and authentication framework solutions.

Maybe you’ve heard of cases of banks debiting or transferring money twice, of large stores getting their card payment system down nation-wide right in the middle of a business day, of governments unable to pay allowances due to IT outages.

These are some examples of situations I handled there.

Of course, things didn’t get that bad every day. In fact, given the interests at play, a major part of our work was to act proactively by closely following and wisely advising our customers.

I applied vulnerability assessment practices to the realm of health checks, building the tools and processes to collect, analyze and provide actionable reports on customers’ production environments. I also wrote numerous knowledge base articles and was the main author of a technical newsletter distributed to major banking customers.

For several years, I also wore the hat of team leader of the Financial Exchange support team. For me, this role was like “helping others to better help people”. It was a very rewarding experience.

People often forget that there are humans behind software, and often don’t realize the importance, maybe even the necessity, of having people feel well for software and services to be done well. So many technical issues actually find their roots in human issues: inability to communicate, to understand each other, to find a suitable compromise.

I believe in openness, information sharing and mutual comprehension (or at least tolerance). Diversity is what makes any social group rich, no matter if we are talking about a company, a project team, an association or even a family, but diversity never goes without a certain amount of friction.

Being able to go beyond these frictions the right way is what allows a group to move forward as a whole.

Old memories

As I said above, I often highlight that even though we are working with machines, we are not machines ourselves. We are the result of our own history, and I always find it interesting to know what makes people what they are now, what led them to be here right now, doing the exact thing they are currently doing.

So let me share with you a bit of my own path…

Pandora’s box

My youth was punctuated by regular viewings of the movie D.A.R.Y.L. For those who don’t know it, its plot is somewhat similar to Spielberg’s A.I., with a robot child animated by advanced artificial intelligence and the question of the distinction between sentient beings and such machines.

Add to this a few things about computer security (a child who understands how computers “reason” and is apparently able to bend them to his will), and the difficulty some youngsters have getting along with others who may not share their vision of the world, with the relationship issues this may raise with both comrades and grown-ups [1].

Then the first real computer entered my home. It was a second-hand Pentium 120, equipped with 32 MB RAM and running Windows 98. I had been left alone with the computer for barely two minutes, my mother still in the courtyard saying goodbye to the guy who had provided us the machine, when I rendered it “unusable”. At that time, changing the display resolution was indeed a risky task!

“Keep calm and carry on”, as the saying goes: I found myself navigating blindly through the menus and options as I remembered them, while checking my mother’s location outside through the window, and finally managed to get the computer up and running again just in time. What a relief!

I tell this little story just to show how quickly I learned that computers were, in fact, nothing more than a stupid stack of metal and silicon, ready for both the best and the worst without any discrimination, and that calm and perseverance (stubbornness?) are usually your best allies to escape from the most cumbersome situations.

Down the rabbit hole

Eventually I managed to save enough money to buy my very own shiny new computer: 433 MHz and 64 MB RAM, quickly extended to 128 MB, which was far more than enough at that time. But, more importantly, I had the surprise of getting a real user’s guide with this computer [2].

I never really delved into the first part on software usage, as it was mostly already known material, but I spent countless hours studying the hardware part: hundreds of pages about computer architecture, standards, buses, chips, connectors, devices, interoperability and troubleshooting.

A hidden world was unveiling itself, at last!

Note

The reader should note that all this occurred in a world quite different from our current post-2000 era.

That was a time without Internet (yes, there was a time people used computers without Internet access!), cellphones, or anything like that. Quality information was a lot harder to obtain than it is now.

As people around me used to say: I was not playing on the computer, I was playing with the computer. And the more I learned, the more I wanted to learn.

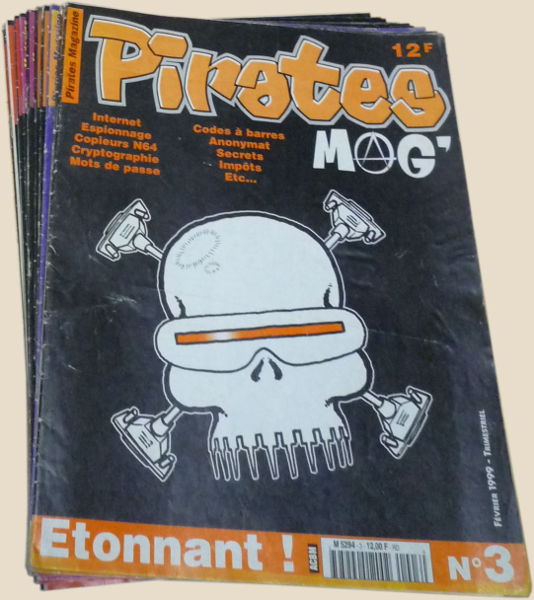

Some day, at some newsstand, chance led me to stumble on one of the first issues of the Pirates Mag’ magazine. It looked very different from the usual computer magazines which flourished at that time, with their flashy colors and their CD-ROMs filled with shareware software. This one had fewer pages, very few colors, no CD-ROM and no bright advertisements, and the content seemed… odd: ACK-storms, NetBIOS scanning, cryptography, privacy and software vulnerabilities. Hmmm, do all these things really exist? I need to know more about these things.

Then the Internet slowly democratized itself, first with very limited plans offering 30 minutes per month. Yes, France was a little late to the Internet, trying too hard to sell its Minitel to the rest of the world ;). But at some point AOL came and crushed the market with an offer allowing 20 hours per month, opening the possibility to do some real Internet searches right from the comfort of your home.

At that time Google was in its infancy, complex and not very efficient. People used to rely on thematic directories, the Links sections of like-minded websites and webrings to find new addresses.

It was not long before I started to stumble on “cracking” blogs. “Cracking” did not have then the same negative and malicious meaning it has now. It was a term merely applied to software security, as opposed to hacking, which was more associated with network security. These may very well be odd and wrong definitions, but it was just like that at that time: cracking for the software, hacking for the networks, phreaking for phone lines and their colorful boxes, carding for card-related things ranging from magstripes to smartcards.

Most of these “cracking” websites were run by benevolent people studying and sharing information on how software works at a low level: organization of an executable as a file and in memory, the mapping between source code and assembly, various obfuscation and packing techniques with their strengths and weaknesses, techniques to control the execution flow.

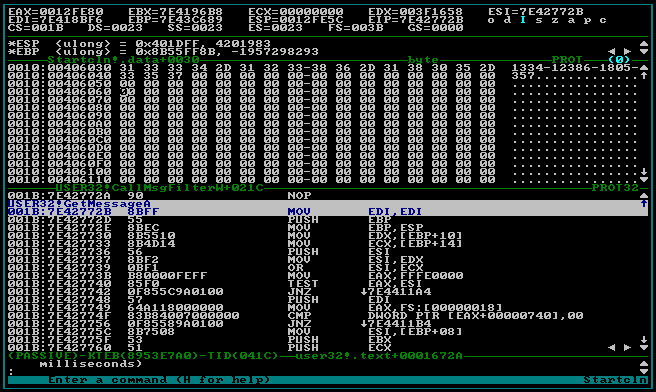

In addition to W32Dasm, Hiew, and other mythical tools, these websites introduced me to the realm of SoftICE, a ring-0 debugger. My sweet spot.

Unlike user-mode debuggers such as W32Dasm [3], this one loads itself before Windows and runs in the background over the whole operating system. It provides complete control of the computer’s execution flow, memory and CPU register values. In other words, it opens a highway to the inner side of what the computer “thinks”, allowing you to step through any software instruction by instruction and wander throughout its code like in a hallway, entering functions as you would enter rooms, peeking in memory as in drawers.

The Matrix was still in all heads at that time, and it really gave a similar feeling. Wanna become invulnerable? What about skipping the function in charge of decreasing your life when you get hit? Meh, the same function is also used to decrease enemies’ life when you hit them and we are all invulnerable now! How about reworking a bit the function displaying your life-bar to make it refill your life instead? Great, it works better! And now, what about the ammunition? And the current location? The disabled abilities? How about making all those tweaks optional? [4]

By the time other people finished the game, I was still at level 1, learning and experimenting with every “and what if…?” that crossed my mind. I was playing with games the same way I was playing with computers: not exactly the intended way ;).

University studies

Maths is everywhere!

So knowing maths means you know everything, doesn’t it? At least that’s what a lot of people seem to think…

I never really understood the importance given to mathematics studies, at least in the French educational system. The ability of a student to absorb and regurgitate abstract tables and theorems is often taken as a measure of his intelligence, a thing I always found wrong and unfair.

Sadly, at that time, computer security was mathematics. In fact, at that time, in (French, at least) academic circles computer security was a mere synonym of cryptography, and studying computer security meant spending about 80% of your time studying the mathematical principles underlying cryptographic algorithms from an abstract point of view.

Practical application was considered secondary, so the remaining 20% was deemed sufficient to cover all the rest, from hardware to network topologies through software and communication protocols, and the design, development, implementation and testing methodologies related to each of these domains.

I always thought this was just nonsense. Being a good cryptographer doesn’t automatically make you a good sysadmin, pentester or auditor. Fortunately, as per my understanding, things have evolved now and a student willing to learn about practical IT security has ways to do so and make the most of his inclinations.

“IT security professional”? Get a real job instead!

The lack of interest in practical IT security in academic circles was in fact just mirroring the lack of interest for this matter in the professional world as a whole.

At that time, if you wanted to work in IT security, you had three main career paths:

- Join some underground black hat group and engage in some downright illegal business. This is the best way to go to jail [5].

- Become an independent security researcher. These people were considered threats, just like black hat people.

  This was demonstrated by the conviction of Serge Humpich after he demonstrated [6] the weakness of the French smartcard payment system, and reinforced by the conviction of Guillermito, who proved that the claim that a certain anti-virus software was able to protect against “all known and unknown viruses” was wrong (this case led Bruce Schneier to write: “France Makes Finding Security Bugs Illegal”).

- Work in the IT security department of some company. IT security was badly perceived in the corporate world as costing money and creating hindrances without any obvious return on investment. Working in the IT security department therefore meant working with no budget, unable to do the work you were meant to do, and being held responsible and fired in case of a security event [7].

  Don’t even expect the conditions to be better for your successor since, at that time, a security incident was perceived merely as a public relations issue and not a technical one, so the budget increase would go in priority to the PR and marketing departments, the IT security department getting a few remaining crumbs at best.

My first (unofficial) pentest report

I therefore chose to focus on network and system architecture studies, which were the closest match to my centers of interest. Maths still played a large role, allowing students who weren’t good at computing but good at maths to get IT engineer diplomas, but at least the reverse was also true: most of the curriculum was in fact about computer science, and I really enjoyed most of it.

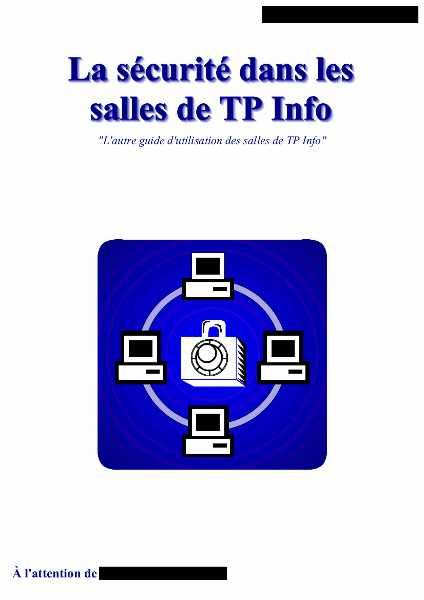

Opening a session on the university lab room computers was my first direct interaction with a well-structured medium-sized LAN, involving network segmentation, network services, shared resources, policies, etc.

It didn’t take long before my “and what if…?” surfaced here too and led me to do just a little test here or confirm a theory there. Slowly, as my understanding of the involved systems and networks progressed, my tests became more and more advanced and organized until eventually… I wrote a clean report and handed it in person to the University’s system administrator.

From the hardware to the network, through client and server systems and including the development of ad-hoc tools, it detailed all my research and findings, everything well sorted, with step-by-step procedures to reproduce each issue, accompanied by mitigation suggestions.

This report started with an introduction stating my motives. Here is a translated excerpt:

I started with a very simple observation: if I ever want to have a chance to play at “bypassing” network security systems, I have to do it now, while still at the University, as the teaching staff’s reaction won’t be comparable to the one from a webmaster on the Internet or the manager of a company hiring my services. […]

When entering the University, I had no knowledge of network security and this “game” was, for me, a huge learning occasion. […]

I hope that you will understand that this study was for me, above anything else, the occasion to learn a lot about a domain which was unknown to me until now and which is only vaguely covered in the curriculum while at the same time seeming really fascinating: network security.

I took all the precautions possible to both ensure that no part of this report could leak and that it was clear that I had no personal advantage whatsoever in making it, except learning and the feeling of maybe doing something useful.

To my relief, the report was well received. I also had the chance to do my internship with this system administrator, discovering the other side of the mirror. Among other tasks, I checked the security of a quota-enabled printing server he planned to use the following year. It didn’t take me long to figure out how to charge print jobs to other accounts. I attended the call to the company providing this server, and I still feel sorry for the support guy having to acknowledge that, no, they had no workaround nor solution available for the issue he was raising.

Warning

For the youngsters reading this, I do not incite nor encourage you to follow the same path, it’s actually the opposite. As I stated earlier, this was a different time when information was hard to obtain, there was no usable virtualization software and IT security was a neglected, if not negatively perceived, domain.

Don’t expect the same outcome in today’s world.

You want to practice? Head to the lab section, download vulnerable environments, participate in CTF events or try out their published challenges, apply for bug bounties.

Within your University you may also build a club, either independently or with the support of another organization such as a local hacklab, spread security and privacy good practices, organize cryptoparties, etc.

There are just so many ways to develop your knowledge nowadays: it really isn’t worth entering any gray area.

The workload of the rest of my studies didn’t leave me much time for any further personal research, however I tried to pull my work toward IT security each time there were project topics to choose from (and other students were more than happy to leave them to me ;) ).

Switch to the *nix world

From Windows to Mandrake Linux

At that time I was already using free software! But it was “free” more like “fell of he truck” than “free beer” or even “free speech”.

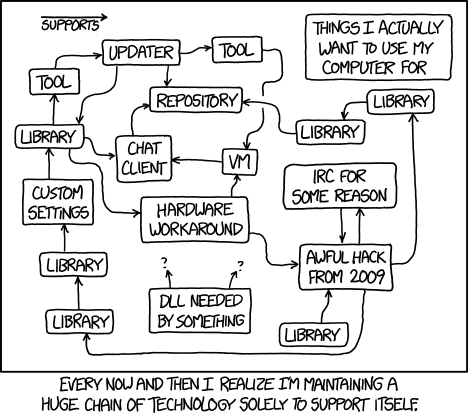

Years of experiments added layers of tools monitoring onto each other, either as legitimate security features or as a way to bypass things: avoid call-home features, forge time API replies, unpack and patch binaries and memory, avoid the detection of shady tools and techniques, etc.

One day I changed a firewall rule my shareware screen saver suddenly stopped working (it managed to call home). I realized absurdity of this setup and decided to throw everything away and switched to Linux as my main operating system, in particular Mandrake Linux.

At that time Mandrake Linux was available in cultural goods retail stores as boxes, with a generous user’s guide, several CD-ROMs and a lot of friendliness [8]. While Linux was for a long time reserved to server environments, Mandrake was one of the most active distributions in pushing it to end-user workstations. It was in particular the distribution with the best out-of-the-box device support [9].

Mandrake Linux’s ease of use, combined with the progress made by the Wine project, which became able to handle even large and complex applications such as the Microsoft Office [10] suite on Linux, removed any real need to use Windows anymore:

- You get a full-featured workstation for free, as in “free speech”.

- No need to maintain all these layers of software watching each other, freeing up computer resources to do actual work.

- Software for anything you could imagine is at your disposal, at no additional cost and with no download time: insert the install disc, say what you want and you have it ready for you.

- Curiosity is encouraged: no reverse-engineering needed to investigate how a piece of software works or alter its behavior, just get the source code or reach the developers on a mailing list.

By the way, it was about that time that IBM launched a series of TV ads, including this famous one on Linux which I recommend watching if you don’t already know it. It conveys this feeling in a great way!

From Mandrake Linux to the rest of the FOSS world…

The open nature of Linux led me to the BSD world, setting up OpenBSD as a home router (since at that time Internet modems were plain modems, not routers [11]), and setting up Debian and FreeBSD as servers (once you have tasted jailed services, it’s difficult to go back!).

Then someone else took up the challenge of pushing Linux to people’s desktops: a guy who became a multimillionaire after selling his company and who, after treating himself to a trip into space, instead of going for a “blowout yachts and blondes situation” as he put it, chose to create a new Linux distribution with its famous bug #1: “Microsoft has a majority market share”.

Ubuntu was born.

They went as far as sending Ubuntu discs free of charge (including shipping) to anyone asking, anywhere in the world. You just had to fill in a public form on their website with your name, address and how many discs you would like, and a few weeks later you would indeed receive them. This was just something never seen before.

I used Xubuntu, the XFCE-based Ubuntu flavor, for several years until I got angry at it when a standard update broke the X server (plus a few other grievances accumulating over time). After some time spent with Xandros [12] and then eLive [13], I finally settled on openSUSE for the following years, as this system just worked out of the box, getting the job done whatever situation you encountered.

I have now been using Qubes OS on my main machine for a few years, as I’m a strong proponent of security through isolation (my background of using FreeBSD jails, most probably ;) ), and I still use openSUSE on secondary ones, but with the list of openSUSE grievances growing too fast lately, I’m in the process of migrating away [14].

… And to proprietary Unixes

In addition to these open-source operating systems, my work at Axway was also the occasion to use various commercial Unixes on a daily basis (I also spent some time on a few Windows servers but that’s boring and not worth mentioning ;) ):

- HP-UX, the Unix from Hewlett Packard running on Itanium systems. This system is just awkward. Useful to test scripts in the worst conditions, but awkward when you just need some work to be done.

  For instance, it is the only modern system I know where the `@` character is still interpreted as a kill character by default, canceling whatever you were doing when this character was encountered: logging in, connecting to a server, etc. It is also the only system where writing reliable shell scripts seems just an impossible task as, in addition to HP-UX oddities, commands on two systems running the same version of HP-UX may accept incompatible sets of arguments. I never encountered such a situation in any other environment.

- AIX, the Unix from IBM running on their PPC processors, is a good illustration of a product made by a company considering it is cheaper to deliver a buggy product and massively invest in support and maintenance to fix the part actually used by the customers than to deliver a good quality product in the first place (see my article on this approach, shared by a lot of large companies).

  The fact is that AIX is the one and only system where I encountered a reproducible bug affecting the `cp` command [15], and again the one and only system where I got a Segmentation fault when opening a small and basic configuration file with `vi` [16]. For the rest it works fine and is used by major companies, but I cannot help worrying when seeing that such basic, fundamental bricks are still not right after all those years (the code of `cp` and `vi` should be pretty much historical, I guess).

- Solaris, from Sun (before Oracle bought them) running on SPARC machines, was by far my favorite of all the proprietary Unixes, and my usual go-to environment for a lot of work there. Not only did it behave as I would expect from a *nix environment, but moreover several technologies (like ZFS or DTrace) initially developed for this system made their way into FOSS platforms.

  I have come across some blog and forum posts suggesting that, while I was familiar with the user side of the system, the administrator side was far less pleasant, but I have no first-hand knowledge about this and these may very well be unjustified rants.

  The main issue with this system is that, while its story was taking an even more interesting direction with Sun opening its source code, Oracle then bought Sun as a whole and Solaris is now pretty much reduced to its historical significance.

Note

In these comments I focus only on the software part of the equation. The main value of commercial *nixes compared to the free ones doesn’t lie in the software. Their main advantage is that your whole server, from its hardware and operating system up to, in some cases, the software and services it runs, comes from the same provider.

This obviously saves time (and nerves) when you know that you won’t have different entities blaming each other for an issue, arguing for ages whether the defect is at the hardware, OS or application level and trying to push onto each other the responsibility of providing a fix.

1. For those who haven’t read it, I strongly recommend reading the Hacker’s Manifesto. I discovered this document only years later, but it describes this world and the associated feelings very well.

2. I have always been bothered by the fact that the most basic coffee machines (those old ones with a simple on-off button) always came with an extensive manual explaining what to do and not to do with your newly acquired device, while a computer or any other advanced digital device was just provided as-is, leaving it up to the user to grasp all the things by himself. This is just a race to the bottom.

3. Or OllyDbg, to take a current reference.

4. Personally I was doing all these things just for fun and the learning experience. And one time as a kind of personal “revenge” because the end of Baldur’s Gate 1 really sucks ;). At that time, at most you could publish your work as a trainer and get some relative fame, but it wouldn’t really go any further. Now times have changed, and MMORPGs opened a huge market for this kind of activity, offering real money and real trouble. Josh Phillips and Mike Donnelly gave an interesting talk about this at DEFCON 19.

5. Moreover, unlike the romanticized image, this was not necessarily even interesting nor fun, as a lot (most?) of these groups just categorized their staff depending on their current abilities and people would do the same thing all day long without ever learning something new. Just some kind of poorly managed remote desk job with jail as the only prospect.

6. It must be highlighted that he did his best to stay within the bounds of the law, being accompanied by a lawyer throughout the process, but even that didn’t help.

7. Things are moving slowly but they are moving, even if there still remains a lot to do, as highlighted by Tony Robinson.

8. Current Linux distributions are far more neutral and impersonal compared to old Mandrake Linux editions (before version 9). There were puns, jokes and exclamations really giving you the feeling of being accompanied in your Linux journey and adding some fun to your activity, while the general tone of current ones is more suited to a corporate audience. This was a time when Clippy was squatting a corner of your screen while you were writing your reports and Microsoft tried citrus hues to decorate its Windows XP.

9. In particular the graphics and audio cards. Do you remember these series of questions out of nowhere that the X Server configuration utility used to ask, preceded by a warning stating that a wrong answer to any of them might destroy your screen? And I won’t even mention the unsolvable dependency issues when trying to compile something. At that time the Linux world was not very engaging for everyday usage…

10. At that time Open Office wasn’t exactly a viable alternative compared to the quality of Microsoft’s Office suite. Now Libre Office perfectly fills most needs and I often recommend it to Linux and Windows users alike, while at the same time Microsoft turned its suite into a barely usable piece of software. It’s always strange how things evolve!

11. Even now, the ISP-provided router being part of the ISP’s own infrastructure, I prefer to use a dedicated router I control as the boundary of my LAN.

12. Xandros pursued the goal of helping Windows users transition to Linux, notably through a special integration of CrossOver Office (a commercial version of Wine offering more cutting-edge features). As far as I remember, they maintained their own software library, which was very limited compared to Debian’s (as used by Ubuntu).

13. This is a still active distribution (note to myself: check it back when I have some time) focused on offering the best eye-candy with the highest performance. And it is true that in these two aspects it always remained unrivaled, even by well-funded alternatives. However, when I tested it (the 2.0 version from a dozen years ago) it lacked configuration wizards, and while I like the command line I also like when the system has enough brain to do high-level things such as joining a WiFi network without me having to manually go through each step.

14. To Debian, most probably: now that there isn’t a new hardware bus standard popping up every year, they have managed to catch up and no longer suffer from the hardware support limitations they used to, while still providing the same reliability.

15. The algorithm checking if one of the sources is a subdirectory of the destination is buggy, making `cp` enter an infinite loop and fill up a whole partition.

16. This one happened only once, but still, I never got such a failure from `vi` in nearly two decades of other *nixes.